AR glasses should focus on mobility and real-time speech-2-context as a go-to-market strategy

Meta's new AR glasses represent impressive technological and design achievements, becoming both powerful and stylish. However, I believe they're missing significant opportunities in terms of novel use cases, which will likely hinder their adoption.

The path to widespread AR glasses adoption faces two critical barriers. First, society will need considerable time to normalize the sight of people wearing smart glasses constantly (and let's not mention the privacy nightmares waiting to happen). Second, and more importantly, current AR glasses largely replicate smartphone functionality, which raises the question: why would people adopt them?

We've already accepted smartphones as intermediary devices that mediate our social interactions, but crucially, phones can be put away or ignored discreetly. AR glasses present a fundamentally different challenge. When someone receives a call or a flood of WhatsApp messages while wearing AR glasses, their distraction becomes immediately visible to whoever they're speaking with face-to-face. Unlike phone notifications that remain hidden in a pocket, AR interactions happen literally before our eyes, during direct eye contact.

This creates an unprecedented social dynamic where digital interruptions can no longer be managed privately. Until AR glasses offer genuinely unique value propositions beyond smartphone replication, I don't see society readily accepting this new layer of visible digital distraction in our interpersonal interactions.

But that's exactly why we should look for new use cases that make immediate sense, significantly lower the barrier to adoption, and put people less on the defensive. This morning, a great use case came along: Disneyland gives visually impaired individuals the chance to understand their surroundings better, in a natural way, through the camera built into AR glasses. In effect, this creates a low-friction, socially accepted adoption of new technology.

But this example, and others that focus on the 'explanatory' functions of AR glasses, won't stretch very far. You can (and probably will) ask a lot about your environment, in the same way you search on your phone when you want to know something. Searching through images is more novel, but narrower in its possibilities.

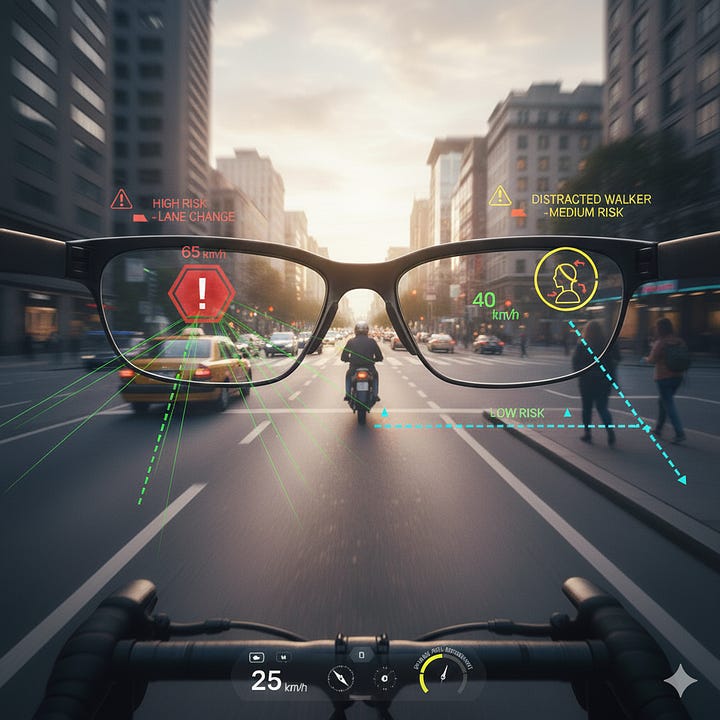

Another very interesting use case, I would argue, lies in mobility, where several things naturally coincide: the benefit is obvious, friction is low, and wearing glasses is already socially accepted and widely adopted. Think of biking, motorbiking, …

You could create useful new functions: a rear-view mirror, danger spotting, route display.

The time spent wearing the glasses is long enough to feel the benefit, but not so long that you would wonder why you are still wearing them.

It is not a weird place to wear glasses, so more people get accustomed to it (think mountain biking, a sunny day, …).

It makes riding your bike safer.

It is absolutely a place where hands-free augmentation feels like a real win, since you need your hands on the handlebars.

Another domain that could definitely make sense is speech-2-context.

Your inner thoughts, conversations, announcements in train stations, theater, … together offer a constant stream of voice and sound, ready to be interpreted in real time, just like humans do. And if, as if by magic, you then get an infinite canvas on which all these different angles of understanding are plotted in front of your eyes, you have yourself a unique experience, maybe even a new paradigm in the evolution of computers: a real-time, context-driven, infinite multimodal canvas.

Ephemeral yet always there.